Software testing has always been a discipline defined by the complexity of what it needs to test. As applications have moved from desktop to web to mobile to cloud, the testing profession has adapted each time. IoT represents a more fundamental challenge than any of those transitions. You are no longer testing a single application running on a predictable platform. You are testing a distributed system of physical devices, communication protocols, firmware, cloud backends, and user-facing interfaces — all of which must work together reliably in environments that testers cannot fully control.

In 2026, the IoT ecosystem has grown well beyond the connected thermostats and smart speakers that defined its early public image. Industrial sensors, medical wearables, connected vehicles, smart city infrastructure, and edge computing deployments have brought IoT into domains where failure has serious consequences. Testing these systems demands a specific and evolving set of skills, strategies, and tools. This post examines the state of IoT testing today: why it is complex, where the hardest problems lie, and how teams are approaching them.

What IoT Testing Actually Involves

IoT testing is not a single activity — it is a collection of testing disciplines applied across an unusually heterogeneous technology stack. At its core, IoT testing validates that devices, networks, and software systems work together as intended. That means testing the firmware running on the device, the communication protocols the device uses to send and receive data, the cloud or edge services processing that data, the mobile or web applications that users interact with, and the integrations connecting all of these layers.

What makes this genuinely difficult is that each layer introduces its own failure modes, and failures often emerge at the intersections. A device that works perfectly on a strong Wi-Fi signal may behave unpredictably on a congested network. Firmware that passes unit tests may expose vulnerabilities only when combined with a specific hardware revision. The complexity compounds quickly, and standard QA approaches (write tests, run them in a controlled environment, ship) need significant adaptation to work in this context.

The Expanded IoT Ecosystem in 2026

The consumer IoT landscape has matured considerably. The Matter protocol, developed by the Connectivity Standards Alliance with backing from Apple, Google, Amazon, and major device manufacturers, has become the dominant standard for smart home device interoperability. A Matter-certified device can, in theory, work with Apple HomeKit, Google Home, and Amazon Alexa simultaneously. In practice, testing that interoperability across ecosystems — accounting for firmware differences, hub firmware versions, and cloud service variations — remains a significant challenge.
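To get a feel for how quickly that interoperability matrix grows, the combinations can simply be enumerated. The sketch below is illustrative only: the ecosystem names come from the paragraph above, while the firmware and hub version labels are hypothetical placeholders rather than real releases.

```python
from itertools import product

ECOSYSTEMS = ["Apple HomeKit", "Google Home", "Amazon Alexa"]
# Hypothetical version labels, for illustration only.
DEVICE_FIRMWARE = ["1.0.0", "1.1.0", "1.2.0"]
HUB_FIRMWARE = ["previous", "current"]

def interop_matrix(ecosystems, device_versions, hub_versions):
    """Return every (ecosystem, device firmware, hub firmware) combination."""
    return list(product(ecosystems, device_versions, hub_versions))

cases = interop_matrix(ECOSYSTEMS, DEVICE_FIRMWARE, HUB_FIRMWARE)
```

Three ecosystems, three device firmware versions, and two hub versions already produce 18 combinations to commission and exercise, before any cloud service variation is taken into account.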

Beyond smart home, the ecosystem now spans wearables with medical-grade sensor arrays, healthcare monitoring devices subject to regulatory validation requirements, smart city deployments covering traffic management and utility metering, and connected vehicles running over-the-air update mechanisms that must never fail mid-update. Industrial IoT, or IIoT, has seen particularly rapid growth, with manufacturing, energy, and logistics sectors deploying sensor networks that feed real-time data into operational systems. In these environments, a device failure or data integrity issue is not an inconvenience — it has direct operational and safety implications.

Device Heterogeneity and Protocol Diversity

One of the defining challenges of IoT testing is the sheer variety of hardware and communication standards involved. A single product line might include devices running on different chipsets, with different memory constraints, communicating over Wi-Fi, Zigbee, Z-Wave, Thread, cellular (NB-IoT, LTE-M, 5G), or LoRaWAN depending on the use case. Each protocol has different range, bandwidth, power consumption, and reliability characteristics, and each introduces its own testing surface.

Thread, for example, is a mesh networking protocol designed for low-power devices in the home, and it is one of the network transports that Matter runs over, alongside Wi-Fi and Ethernet. Testing Thread mesh behaviour means validating how devices route traffic through one another, what happens when a router node drops from the mesh, and how the network recovers. LoRaWAN, used in wide-area IoT deployments for smart metering and environmental monitoring, presents different challenges: very low bandwidth, long range, and a need to validate device behaviour over hours or days of sparse communication. No single testing tool handles all of these, and teams need protocol-specific expertise as well as general IoT testing capabilities.
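The "router node drops from the mesh" scenario can be reasoned about before touching hardware by modelling the topology as a graph. What follows is a toy model, not Thread's actual routing algorithm: the node names and the `MESH` topology are hypothetical, and the check only asks which nodes would lose every path to the border router if one node failed.

```python
from collections import deque

def reachable(links, start, down=frozenset()):
    """Return the set of nodes reachable from `start`, skipping nodes in `down`."""
    if start in down:
        return set()
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for neighbour in links.get(node, ()):
            if neighbour not in down and neighbour not in seen:
                seen.add(neighbour)
                queue.append(neighbour)
    return seen

def stranded_after_failure(links, border_router, failed):
    """Nodes that lose every path to the border router when `failed` drops."""
    survivors = set(links) - {failed}
    return survivors - reachable(links, border_router, down={failed})

# Hypothetical topology; adjacency is symmetric.
MESH = {
    "border": {"r1", "r2"},
    "r1": {"border", "r2", "sensor_a"},
    "r2": {"border", "r1", "sensor_a", "sensor_b"},
    "sensor_a": {"r1", "r2"},
    "sensor_b": {"r2"},
}
```

In this topology, losing `r1` strands nothing because `sensor_a` can still route through `r2`, while losing `r2` strands `sensor_b`. A model like this only identifies which failures are worth reproducing; the recovery behaviour itself still has to be observed on a real mesh.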

Intermittent Connectivity and Resource Constraints

Real-world IoT devices operate in network environments that testers cannot perfectly replicate in a lab. Connectivity is intermittent. Signal quality fluctuates. A device may lose its connection to the cloud for seconds or minutes and must handle that gracefully — queuing data locally, resuming synchronisation without loss, and recovering to a known state. Testing these failure scenarios requires deliberate network simulation: throttling bandwidth, introducing packet loss, simulating complete disconnection and reconnection cycles.
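The queue-locally-and-resume behaviour described above can be sketched and tested without any radio at all. The example below is a minimal sketch under stated assumptions: `FlakyLink` and `BufferedUplink` are hypothetical names, the link is a test double whose connectivity is toggled by the test, and a bounded queue that drops the oldest readings when full is only one of several possible overflow policies.

```python
from collections import deque

class FlakyLink:
    """Test double for an uplink; `up` toggles simulated connectivity."""
    def __init__(self):
        self.up = True
        self.delivered = []

    def send(self, message):
        if not self.up:
            raise ConnectionError("simulated link loss")
        self.delivered.append(message)

class BufferedUplink:
    """Queues readings locally and flushes them in order when the link allows."""
    def __init__(self, link, capacity=100):
        self.link = link
        # A bounded deque silently drops the oldest reading once full.
        self.pending = deque(maxlen=capacity)

    def publish(self, message):
        self.pending.append(message)
        self.flush()

    def flush(self):
        """Send queued messages in order; return True if the queue was emptied."""
        while self.pending:
            try:
                self.link.send(self.pending[0])
            except ConnectionError:
                return False  # keep the message queued for the next attempt
            self.pending.popleft()
        return True
```

A disconnection test then publishes a reading, sets `link.up = False`, publishes more, restores the link, calls `flush()`, and asserts that everything arrived exactly once and in order. The same assertions can later be pointed at a real device behind a network shaper.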

Resource constraints add another layer of complexity. Many IoT devices run on microcontrollers with very limited processing power and memory, with RAM often measured in kilobytes rather than gigabytes. Firmware must be lean, and testing must account for the possibility that memory leaks or inefficient processing will only manifest after days of continuous operation. Long-duration soak testing, running devices for 72 or 96 hours under representative workloads while monitoring memory usage and performance, is not optional: it is how teams catch the failure modes that short test cycles miss.
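One simple way to turn soak-test telemetry into a pass/fail signal is to fit a straight line to periodic free-memory samples and flag a sustained downward trend. The sketch below assumes the device reports free heap in bytes at known times; the function names and the 16 bytes per hour threshold are hypothetical and would need tuning per product.

```python
def slope_per_hour(samples):
    """Least-squares slope of (hours_elapsed, value) samples."""
    n = len(samples)
    if n < 2:
        raise ValueError("need at least two samples")
    mean_t = sum(t for t, _ in samples) / n
    mean_v = sum(v for _, v in samples) / n
    numerator = sum((t - mean_t) * (v - mean_v) for t, v in samples)
    denominator = sum((t - mean_t) ** 2 for t, _ in samples)
    if denominator == 0:
        raise ValueError("samples must span more than one timestamp")
    return numerator / denominator

def looks_like_leak(free_heap_samples, max_loss_bytes_per_hour=16.0):
    """True if free heap is trending down faster than the allowed rate."""
    return slope_per_hour(free_heap_samples) < -max_loss_bytes_per_hour
```

A linear fit will not catch every pattern (for example, fragmentation that shows up as occasional large allocation failures), so it works best alongside watermark and crash-log checks rather than as a replacement for them.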

Edge Computing and Its Testing Implications

Edge computing has shifted a meaningful portion of application logic from the cloud to devices or local gateways. This changes what needs to be tested and where. An application that previously processed all sensor data in a centralised cloud service may now run inference models on a local edge device, sending only summarised results upstream. Testing the on-device logic — its accuracy, its performance under the device’s resource constraints, its behaviour when the upstream connection is unavailable — requires access to the physical hardware or a sufficiently faithful emulator.

Latency-sensitive scenarios are particularly important in edge deployments. Industrial control systems may require sub-100ms response times for feedback loops that would be impossible with round-trip cloud latency. Testing that these latency requirements are met under realistic conditions — with representative data volumes, concurrent processes, and network load — is a distinct challenge from conventional application performance testing. The testing environment must reflect the actual edge deployment topology, not a simplified approximation of it.
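A latency requirement such as "under 100 ms" is usually better checked against a high percentile than against the mean, because a handful of slow responses can break a control loop while barely moving the average. The sketch below uses the nearest-rank percentile definition; the function names and the choice of the 99th percentile are assumptions for illustration.

```python
import math

def percentile(values, pct):
    """Nearest-rank percentile: the smallest sample with at least
    pct percent of all samples at or below it."""
    if not values:
        raise ValueError("no samples")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[rank - 1]

def meets_budget(latencies_ms, budget_ms=100.0, pct=99):
    """True if the chosen percentile of the samples is under the budget."""
    return percentile(latencies_ms, pct) < budget_ms
```

Whether the 99th percentile, the 99.9th, or the absolute maximum is the right gate depends on how the control loop tolerates a missed deadline, which is a system design decision rather than a testing one.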

IoT Security Testing

IoT security has been a persistent weakness in the industry, and the attack surface continues to expand. The OWASP IoT Top 10 identifies the most critical risk categories: weak, guessable, or hardcoded passwords; insecure network services; insecure ecosystem interfaces; lack of secure update mechanisms; use of insecure or outdated components; insufficient privacy protection; insecure data transfer and storage; lack of device management; insecure default settings; and lack of physical hardening.

Security testing for IoT devices must address all of these. Firmware analysis — extracting firmware images and examining them for hardcoded credentials, known vulnerable libraries, and insecure configurations — is a foundational activity. Network traffic analysis, using tools to capture and inspect the communications between a device and its backend services, reveals whether data is encrypted in transit and whether authentication mechanisms are robust. Testing the update mechanism is critical: an insecure over-the-air update process is an attacker’s path to compromising every device in a deployed fleet.
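As a first pass at firmware analysis, it is common to pull printable strings out of an extracted image and search them for credential-like patterns. The following is a minimal sketch of that idea, not a substitute for dedicated firmware analysis tooling: the patterns are illustrative and far from exhaustive, and the function name is hypothetical.

```python
import re

# Runs of at least six printable ASCII bytes, similar in spirit to `strings`.
PRINTABLE_RUN = re.compile(rb"[\x20-\x7e]{6,}")

# Illustrative patterns only; a real scan would use a much larger set.
SUSPICIOUS = [
    re.compile(rb"(?i)(password|passwd|pwd|secret|api[_-]?key)\s*[=:]\s*\S+"),
    re.compile(rb"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
]

def find_suspect_strings(image: bytes):
    """Return (offset, text) for printable runs that match a suspicious pattern."""
    hits = []
    for run in PRINTABLE_RUN.finditer(image):
        text = run.group()
        if any(pattern.search(text) for pattern in SUSPICIOUS):
            hits.append((run.start(), text.decode("ascii")))
    return hits
```

Hits from a scan like this are leads for manual review, not findings in themselves. Compressed or encrypted firmware sections will not yield readable strings until they have been unpacked.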

Default credential testing — verifying that devices do not ship with known-default administrative credentials that users are unlikely to change — remains relevant despite years of industry attention. Many consumer devices still fail this basic check. For industrial and healthcare deployments, where regulatory requirements add additional security obligations, IoT security testing must be systematic, documented, and repeatable.
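A default-credential check is straightforward to make repeatable once the login attempt is abstracted behind a function. In the sketch below, `try_login` is a hypothetical callable that a team would implement for its own device interface (web admin page, SSH, serial console), and the candidate list is a short illustrative sample, not a complete dictionary. It should only ever be run against devices the team owns or is authorised to test.

```python
# Illustrative sample of commonly seen defaults; real lists are much longer.
DEFAULT_CANDIDATES = [
    ("admin", "admin"),
    ("admin", "password"),
    ("root", "root"),
    ("admin", ""),
]

def accepted_defaults(try_login, candidates=DEFAULT_CANDIDATES):
    """Return every (username, password) pair the device accepts.

    `try_login(username, password)` must return True on a successful login.
    """
    return [pair for pair in candidates if try_login(*pair)]
```

The release gate is then a single assertion that the returned list is empty for a factory-reset device.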

Performance, Reliability, and Battery Life Testing

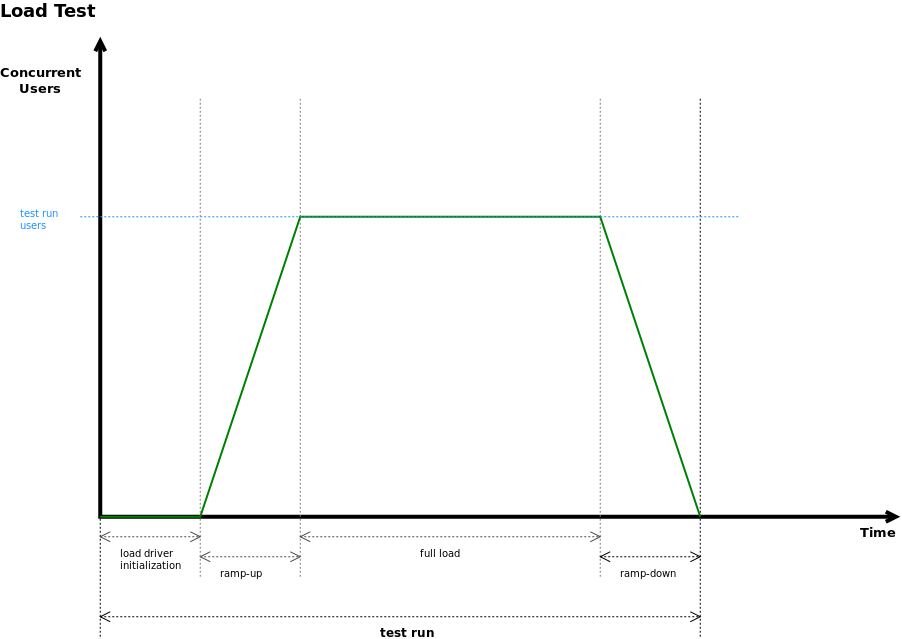

Performance testing for IoT differs from web or mobile performance testing in important ways. The relevant metrics include battery life, data transmission efficiency, wake-up latency from low-power sleep states, and the time required for a device to process and respond to incoming commands. For battery-powered devices, even small inefficiencies in power management can translate to weeks of difference in device lifetime — which is a user-facing quality issue with real commercial consequences.
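The effect of small power-management inefficiencies is easy to see with a duty-cycle estimate: average the active and sleep currents over one reporting period, then divide battery capacity by that average. The sketch below is an idealised calculation that ignores self-discharge, temperature, and voltage droop, and every number in the usage note is a hypothetical example rather than a measurement.

```python
def average_current_ma(active_ma, active_s, sleep_ma, period_s):
    """Average current over one period with a single active burst."""
    if not 0 <= active_s <= period_s:
        raise ValueError("active_s must be between 0 and period_s")
    sleep_s = period_s - active_s
    return (active_ma * active_s + sleep_ma * sleep_s) / period_s

def battery_life_days(capacity_mah, avg_ma):
    """Ideal lifetime in days for a given capacity and average draw."""
    if avg_ma <= 0:
        raise ValueError("average current must be positive")
    return capacity_mah / avg_ma / 24
```

For a hypothetical 2400 mAh cell, a device that wakes every 10 minutes, draws 20 mA while active and 10 µA asleep, and stays awake for 2 seconds has an ideal lifetime of roughly 1,300 days. Stretching the awake time to 3 seconds brings that down to roughly 900 days, which is why wake-time regressions deserve their own test.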

Reliability testing at scale is another challenge unique to IoT. A cloud service that handles a million concurrent users is a well-understood scaling problem. A fleet of a million physical devices, each generating telemetry on its own schedule, with varying firmware versions, experiencing real-world environmental variations, presents a different kind of reliability question. Backend services must handle the full variability of what a real device fleet produces — not just the clean, well-formed messages a test harness sends.
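On the backend, handling "the full variability" of a real fleet starts with an ingestion path that rejects malformed telemetry without crashing. The sketch below assumes a hypothetical JSON message schema (`device_id`, `ts`, `temp_c`); real schemas and transports will differ, but the shape of the test is the same: feed the parser deliberately broken payloads and assert that each one is rejected with a reason.

```python
import json
import math

# Hypothetical schema: field name -> accepted Python type(s).
REQUIRED = {"device_id": str, "ts": (int, float), "temp_c": (int, float)}

def parse_telemetry(raw: bytes):
    """Return (message, None) if valid, otherwise (None, reason)."""
    try:
        doc = json.loads(raw.decode("utf-8"))
    except (UnicodeDecodeError, json.JSONDecodeError) as exc:
        return None, f"undecodable: {type(exc).__name__}"
    if not isinstance(doc, dict):
        return None, "not an object"
    for field, kind in REQUIRED.items():
        value = doc.get(field)
        # bool is a subclass of int in Python, so exclude it explicitly.
        if isinstance(value, bool) or not isinstance(value, kind):
            return None, f"bad or missing field: {field}"
        # json.loads accepts NaN and Infinity by default; reject them here.
        if isinstance(value, float) and not math.isfinite(value):
            return None, f"non-finite value: {field}"
    return doc, None
```

Truncated payloads, invalid byte sequences, wrong types, and non-finite numbers are all things a device with a failing sensor or an interrupted transmission can plausibly produce, so they belong in the backend's regression suite alongside the well-formed cases.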

Automation Challenges in IoT Testing

Automating IoT tests is harder than automating web or API tests because the system under test includes physical hardware. You cannot fully spin up an IoT test environment in a Docker container. Real device testing requires physical device labs — racks of hardware that can be remotely managed, reset, and monitored. Maintaining such labs at the scale needed for comprehensive coverage is expensive, and the logistics of keeping firmware in sync across hundreds of physical units are non-trivial.

Simulation and emulation offer a partial solution. Tools like QEMU can emulate certain microcontroller architectures, and some manufacturers provide device simulators for their platforms. On the cloud side, platforms such as AWS IoT Core and Azure IoT Hub can be exercised with simulated devices, which lets teams test the cloud integration layer without physical hardware. (Google Cloud IoT Core, once a common third option, was retired in August 2023.) These approaches reduce the reliance on physical devices for functional and integration testing, reserving the real hardware for scenarios that specifically require it: hardware-specific behaviour, power consumption measurement, radio frequency testing, and long-duration reliability runs.

Test orchestration in IoT environments also requires careful design. Tests must account for device boot time, network join procedures, and the asynchronous nature of device communication. A test that sends a command to a device and expects an immediate synchronous response will be fragile at best. IoT testing frameworks must be built around event-driven, eventually consistent interaction models that reflect how these systems actually operate.
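In practice, an event-driven test sends the command, then waits for the expected state to appear on the device's event stream within a timeout, instead of asserting on the return value of the send call. The sketch below uses `asyncio` from the Python standard library; `FakeDevice` is a hypothetical stand-in for a real device, and `wait_for_state` is an illustrative helper rather than part of any particular framework.

```python
import asyncio

class FakeDevice:
    """Stand-in for a real device: applies a command after a delay,
    then reports the new state as an event."""
    def __init__(self, delay_s=0.05):
        self.delay_s = delay_s
        self.events = asyncio.Queue()

    async def _apply(self, state):
        await asyncio.sleep(self.delay_s)
        await self.events.put({"state": state})

    def send_command(self, state):
        # Returns immediately; the outcome arrives later as an event.
        return asyncio.create_task(self._apply(state))

async def wait_for_state(events, expected, timeout_s):
    """Consume events until one reports `expected`, or time out."""
    async def scan():
        while True:
            event = await events.get()
            if event.get("state") == expected:
                return event
    return await asyncio.wait_for(scan(), timeout=timeout_s)

async def demo():
    device = FakeDevice()
    task = device.send_command("on")  # keep a reference to the task
    event = await wait_for_state(device.events, "on", timeout_s=1.0)
    await task
    return event
```

Running `asyncio.run(demo())` returns the event once the simulated device reports the new state. The timeout should be derived from the device's documented worst-case behaviour (boot, network join, retries) so that a slow but correct device is not reported as a failure.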

VTEST and IoT Testing

At VTEST, we have built IoT testing practices that address the full complexity of these systems, from firmware validation and protocol testing through to backend performance and security assessment. Our engineers understand that IoT quality cannot be validated as an afterthought; it must be designed into the development process from the beginning, with testing strategies that match the architecture of the system being built. Whether you are deploying a consumer smart home product, an industrial sensor network, or a healthcare monitoring device, VTEST brings the domain expertise and technical depth to give you confidence in what you are shipping. Contact us to discuss your IoT testing challenges.

Akbar Shaikh — CTO, VTEST

Akbar is the CTO at VTEST and an AI evangelist driving the integration of intelligent technologies into software quality assurance. He architects AI-powered testing solutions for enterprise clients worldwide.