Almost everything young people do today is driven by technology. Be it dating culture, innovative business startups, or delivery applications, the list goes on. If we look closely, we will see that software is the face of all these forces in today’s digital world. Though it appears in various forms, such as websites and applications, it is arguably the most impactful, game-changing technology of the current era.

Software, like any other product, is developed to meet a predetermined goal. The purpose of software testing, in simple terms, is to find out whether the software meets that goal and to assess its strengths, weaknesses, and areas for improvement.

Software testing is an independent activity that determines whether your software is ready to be launched in the target market. Some of the common characteristics tested include its design, development, response to all kinds of inputs, execution of the desired functions within the right time-frame, and overall efficiency.

Software testing can be performed at different stages of the Software Development Life Cycle (SDLC), as the developer chooses. However, it’s advisable to start as early as possible, since catching defects early saves significant resources. If that is not possible, start as soon as the most basic prototype is developed.

The software testing process usually includes these steps:

- Planning – Plan the whole process systematically.

- Analysis and Design – Analyze and design proper test cases to execute while testing.

- Implementation and execution – Implement and execute the test cases to find bugs and errors.

- Evaluation and reporting – Evaluate the overall testing result, mainly comprising all the bugs found, and draft a report based on the evaluation.

- Test closure – Finish the process by sending the report to the developers’ team.

Note that this process should be repeated several times before the application or software is released.

Every company is keen to get its software tested in a thorough, comprehensive manner to find out and fix all the glitches. However, it’s not easy to know how far you’ve come towards your goal. There’s always scope for improvement. Here are some general guidelines:

- Define a process

Before going ahead with actual testing, it certainly helps to define a clear-cut, expert-approved testing process. Rather than being followed rigidly, it can act as a guideline. We can treat this process as a baseline and tweak it as development goes on. But a process is only as good as its execution, so it should be designed with a practical approach.

- Involve testers in the development

If testers are involved from the beginning and throughout the process, they get a good, close look at the product, which helps them devise a comprehensive testing process. It also helps find and fix glitches early on, saving resources. The more they know and understand, the more they can contribute. It’s that simple.

- Maintain records

In your day-to-day life, you store all the important documents you need in your cupboard. You store food in the fridge. Why do you do it? It’s because storing these things helps you carry out future activities more smoothly. In the same way, it’s highly useful to store all the relevant information related to a product, from its testing plan to its product details, as documents and reports in a separate folder, so that it is easy to access. This way, we avoid last-minute regrets and streamline our testing operation.

- Have prototypes ready in advance

It’s safe to have prototypes ready in advance for the fourth stage. This improves the productivity of both the developer and the tester, and it saves time. It can also help the testers find out whether the requirements specified for the product can be tested. It’s important, however, that these prototypes and test cases are easily understood by the tester.

- Be critical

The job of a tester, any tester, is to independently evaluate the product they’re testing. It’s important to be unbiased before, during, and after testing. One can’t assume that software is perfect, regardless of how good the developer is. Start by assuming that there are glitches, and then rule them out step by step. If you look for glitches, you’ll find them, and then they can be fixed. That should be the aim.

- Divide the product into parts

The testing team can divide the entire product into smaller parts. This ensures that even the smallest aspect of an application doesn’t go unnoticed. Dividing the overall logic into smaller modules makes it simple to identify where a problem lies, so any glitches that come up are easy to spot and fix. Additionally, doing this saves time, contrary to what we might think.
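As a minimal sketch of this idea, the example below splits a hypothetical checkout calculation into three small Python functions and checks each one on its own. The names `parse_price`, `apply_discount`, and `cart_total` are assumptions made up for illustration and do not come from any real product. When a check fails, the small scope of each function points directly at the part that is broken.

```python
from decimal import Decimal
from typing import List


# Hypothetical checkout logic, split into small parts that can be tested alone.
def parse_price(text: str) -> Decimal:
    """Convert a price string such as '19.99' into a Decimal."""
    return Decimal(text.strip())


def apply_discount(price: Decimal, percent: int) -> Decimal:
    """Reduce a price by a whole-number percentage between 0 and 100."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return price * (Decimal(100) - Decimal(percent)) / Decimal(100)


def cart_total(prices: List[str], percent: int) -> Decimal:
    """Combine the smaller parts: parse each price, sum them, then discount."""
    subtotal = sum((parse_price(p) for p in prices), Decimal(0))
    return apply_discount(subtotal, percent)


# One focused check per part, plus one for the combined behaviour.
def test_parse_price() -> None:
    assert parse_price(" 19.99 ") == Decimal("19.99")


def test_apply_discount() -> None:
    assert apply_discount(Decimal("200"), 25) == Decimal("150")


def test_apply_discount_rejects_bad_percent() -> None:
    try:
        apply_discount(Decimal("10"), 150)
    except ValueError:
        return
    raise AssertionError("expected ValueError for percent > 100")


def test_cart_total() -> None:
    assert cart_total(["10.00", "30.00"], 50) == Decimal("20")


if __name__ == "__main__":
    for test in (
        test_parse_price,
        test_apply_discount,
        test_apply_discount_rejects_bad_percent,
        test_cart_total,
    ):
        test()
    print("all checks passed")
```

The plain `assert` style is used here only to keep the sketch dependency-free; the same functions could be run by a test runner such as pytest without changes.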

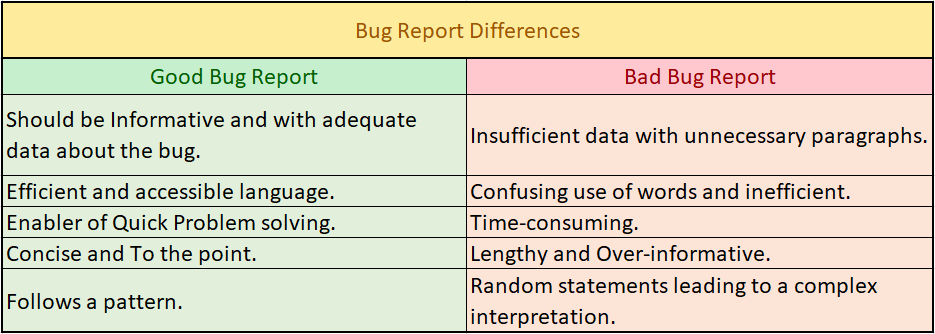

- Write a comprehensive testing report

A clear and detailed bug report is a must if you want to deliver a flawless product. Apart from leading the developers to the bugs and helping them fix them, it saves valuable time. The report should be clear and understandable to both the developers and the testers, so that they can work together to deliver the product. Check out our blog on how to draft a good bug report to get a clearer picture.
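One way to keep reports consistently detailed is to give them a fixed structure. The sketch below is only an illustration: the `BugReport` class and its field names are assumptions, not a standard or a format prescribed here. It shows a report that can tell you which required fields were left empty before it is sent to the developers.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class BugReport:
    """Hypothetical bug report structure; the field names are illustrative."""

    title: str
    steps_to_reproduce: List[str]
    expected: str
    actual: str
    environment: str
    severity: str = "medium"

    def missing_fields(self) -> List[str]:
        """Return the names of required fields that were left empty."""
        required = {
            "title": self.title,
            "steps_to_reproduce": self.steps_to_reproduce,
            "expected": self.expected,
            "actual": self.actual,
            "environment": self.environment,
        }
        return [name for name, value in required.items() if not value]

    def to_text(self) -> str:
        """Render the report as plain text that developers can read quickly."""
        steps = "\n".join(
            f"  {number}. {step}"
            for number, step in enumerate(self.steps_to_reproduce, start=1)
        )
        return (
            f"Title: {self.title}\n"
            f"Severity: {self.severity}\n"
            f"Environment: {self.environment}\n"
            f"Steps to reproduce:\n{steps}\n"
            f"Expected: {self.expected}\n"
            f"Actual: {self.actual}"
        )


report = BugReport(
    title="Login button unresponsive on second click",
    steps_to_reproduce=["Open the login page", "Click 'Log in' twice quickly"],
    expected="The form is submitted once",
    actual="The page freezes",
    environment="",  # left empty on purpose to show the completeness check
)
print(report.missing_fields())
print(report.to_text())
```

In this example the completeness check flags `environment`, which is exactly the kind of gap that forces developers to come back with questions.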

- Create a good test environment

The testing team must test a product in a simulated environment that matches the one it’s intended to be used in after launch. This ensures that no glitches or bugs are missed during testing.

For example, if a developer has added certain configurations or run certain scripts but has not recorded them in the release document or configuration file, the testers may not be able to test the product thoroughly, leaving some glitches in the final product.
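A gap like this can often be caught with a simple comparison before testing begins. The following sketch assumes both the developer's environment and the release configuration are available as Python dictionaries; the function `find_config_drift` and the setting names are hypothetical and used only for illustration.

```python
from typing import Any, Dict, List


def find_config_drift(
    dev: Dict[str, Any], release: Dict[str, Any]
) -> Dict[str, List[str]]:
    """Compare a developer's settings against the release configuration.

    Reports settings that exist only on the developer's side and settings
    whose values differ between the two environments.
    """
    missing = sorted(key for key in dev if key not in release)
    different = sorted(
        key for key in dev if key in release and dev[key] != release[key]
    )
    return {"missing_in_release": missing, "different_values": different}


# Hypothetical settings: the developer enabled FEATURE_X locally and changed
# DB_POOL, but neither change made it into the release configuration.
dev_config = {"CACHE_TTL": 300, "FEATURE_X": True, "DB_POOL": 10}
release_config = {"CACHE_TTL": 300, "DB_POOL": 5}

drift = find_config_drift(dev_config, release_config)
print(drift)
```

Here the check reports `FEATURE_X` as missing from the release configuration and `DB_POOL` as having a different value, so the testers know the test environment needs attention before they start.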

- Developer-tester coordination

This is the most important, yet the most difficult, point to put into practice. Any great product is a combined effort of the testing team and the development team. Coordination between these two teams (for example, discussing a testing report or brainstorming ways to fix the glitches) pays off. It is equally important to keep an e-trail of all such discussions so that you can refer back to them, just in case.

Conclusion

As discussed earlier, software development is not a passing fad that can be ignored. It is here to change the game. If we want to move towards a brighter and smarter future, software development is the path we should walk.

That’s why software testing is a must. It is like repairing the road to the future. If we do not remove these bumps called bugs on this road of coding, we as humans might fail in the long run.

Testing is a necessary activity before any software is launched. What we need is a well-thought-out plan, a critical approach, and a good working relationship between the testing team and the development team, both committed to delivering a flawless product. It is simple enough to understand, but not so easy to put into practice.

How can VTEST help?

We at VTEST strive to excel. With our team of intelligent, young, and dynamic testers, we are changing the game of software testing. We understand how important it is to streamline a testing process and to stick to it without losing sight of the main purpose of testing. Our experts can work well with development teams to deliver an error-free product.

VTEST it!

Shak Hanjgikar — Founder & CEO, VTEST

Shak has 17+ years of end-to-end software testing experience across the US, UK, and India. He founded VTEST and has built QA practices for enterprises across multiple domains, mentoring 100+ testers throughout his career.