Penetration testing is one of the most misunderstood practices in cybersecurity. Many organisations treat it as a checkbox exercise — something done once a year to satisfy an auditor — without understanding what a well-executed penetration test can and cannot tell them. In 2026, with the threat landscape evolving faster than most security programmes can track, that misunderstanding is increasingly costly. This guide provides a complete, technically grounded overview of penetration testing: what it is, why it matters, how it works, and how to use it effectively as part of a broader security programme.

What Is Penetration Testing?

Penetration testing — commonly shortened to pen testing — is an authorised, simulated cyberattack conducted against a defined target system, network, or application by security professionals. The objective is to identify and exploit vulnerabilities before malicious actors do, and to document the findings with sufficient detail for remediation.

The key distinction from other security assessments is exploitation. A penetration test does not merely scan for known vulnerability signatures; it attempts to exploit discovered weaknesses and to determine whether they can be chained together to achieve meaningful impact: data exfiltration, privilege escalation, lateral movement, or service disruption. This exploitation step is what separates penetration testing from vulnerability scanning, and it is why pen test findings are more reliable: each one is backed by demonstrated evidence rather than a signature match.

Why Penetration Testing Is Critical in 2026

The threat environment facing organisations in 2026 is materially different from five years ago, and several developments have elevated the importance of regular, high-quality penetration testing.

Supply Chain Attacks

The compromise of software supply chains — third-party libraries, build pipelines, CI/CD tooling, and managed service providers — has become one of the dominant attack vectors. Supply chain attacks are difficult to detect with perimeter-focused security controls because malicious code enters through trusted channels. Penetration testing that covers software composition, dependency integrity, and third-party integrations is increasingly essential.
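One small, concrete piece of dependency-integrity testing is confirming that an artifact still matches the digest that was pinned when it was reviewed. The following is a minimal Python sketch, assuming SHA-256 pins; the function names and the sample bytes are hypothetical and not taken from any particular tool.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    """Return the hex-encoded SHA-256 digest of the given bytes."""
    return hashlib.sha256(data).hexdigest()


def verify_artifact(data: bytes, pinned_digest: str) -> bool:
    """Compare an artifact's digest with the pinned value (case-insensitive)."""
    # compare_digest avoids leaking the match position through timing.
    return hmac.compare_digest(sha256_hex(data), pinned_digest.lower())


# Hypothetical artifact contents and the digest pinned at review time.
artifact = b"example-package-1.0.0"
pinned = sha256_hex(artifact)

# The same artifact after something was appended in transit or in the build.
tampered = artifact + b"\n# injected"

print(verify_artifact(artifact, pinned))   # unchanged artifact
print(verify_artifact(tampered, pinned))   # modified artifact
```

A check like this only proves the artifact is the one that was pinned; it says nothing about whether the pinned version was trustworthy in the first place.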

AI-Powered Threats

Adversaries are using large language models to accelerate reconnaissance, generate phishing content at scale, automate vulnerability discovery, and write exploit code. The speed and volume of AI-assisted attacks have increased substantially. Organisations can no longer rely on the assumption that they are an unlikely target or that manual attack methods will give them adequate warning time.

Regulatory Requirements

Penetration testing is now explicitly required or strongly implied by a growing set of regulatory frameworks:

- PCI-DSS v4.0 requires penetration testing of the cardholder data environment at least annually and after significant infrastructure or application changes.

- ISO 27001:2022 includes controls that require organisations to test the effectiveness of their security controls. The standard does not name penetration testing as a mandatory activity, but it is the most common way to evidence this for technical controls.

- SOC 2 Type II auditors increasingly expect evidence of penetration testing as part of the availability, confidentiality, and security trust service criteria.

- GDPR requires organisations to implement appropriate technical measures to protect personal data, and regulators have cited the absence of penetration testing in enforcement actions following breaches.

- DORA (Digital Operational Resilience Act), effective from January 2025 for EU financial entities, mandates threat-led penetration testing for significant institutions.

Types of Penetration Testing

Network and Infrastructure Penetration Testing

Tests external and internal network infrastructure: firewalls, routers, switches, VPNs, and exposed services. External network pen tests simulate an attacker with no prior access attempting to breach the network perimeter. Internal network pen tests simulate a threat actor who has already obtained a foothold inside the network — modelling insider threats or post-phishing scenarios — and attempt to escalate privileges and move laterally.

Web Application Penetration Testing

Targets web applications for vulnerabilities across authentication, authorisation, input handling, session management, business logic, and API endpoints. Web application pen testing is the most common engagement type and typically follows the OWASP Web Security Testing Guide (WSTG) methodology. Given that web applications are the primary attack surface for most businesses, this is usually the highest-priority pen test engagement.

Mobile Application Penetration Testing

Assesses iOS and Android applications for client-side vulnerabilities: insecure data storage, improper certificate validation, exported components, runtime tampering, binary protections, and API communication security. Mobile pen testing requires device-level access and specialised tools. The OWASP Mobile Application Security Verification Standard (MASVS) provides the requirements framework, and its companion Mobile Application Security Testing Guide (MASTG) supplies the corresponding test cases.

API Penetration Testing

With modern architectures increasingly API-first, dedicated API pen testing has become essential. API pen tests target REST, GraphQL, and gRPC interfaces for broken object-level authorisation (BOLA, the API-era name for insecure direct object references), broken function-level authorisation, mass assignment, rate limiting bypass, and injection vulnerabilities. The OWASP API Security Top 10 is the primary reference framework for this test type.
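Broken object-level authorisation is easiest to understand side by side with its fix. The sketch below is a self-contained Python illustration that uses an in-memory dictionary instead of a real API; the `Invoice` model, the handler names, and the users are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Invoice:
    invoice_id: int
    owner: str
    total: float


# Hypothetical data store standing in for the API's backend.
INVOICES = {1: Invoice(1, "alice", 120.0), 2: Invoice(2, "bob", 75.5)}


def get_invoice_vulnerable(current_user: str, invoice_id: int) -> Invoice:
    """Knows who the caller is but never checks who owns the object."""
    return INVOICES[invoice_id]


def get_invoice_fixed(current_user: str, invoice_id: int) -> Invoice:
    """Enforces object-level authorisation before returning the record."""
    invoice = INVOICES[invoice_id]
    if invoice.owner != current_user:
        raise PermissionError("caller does not own this invoice")
    return invoice


def is_bola_exposed(handler, attacker: str, victim_object_id: int) -> bool:
    """Return True if the attacker can read an object they do not own."""
    try:
        handler(attacker, victim_object_id)
        return True
    except PermissionError:
        return False


print(is_bola_exposed(get_invoice_vulnerable, "alice", 2))
print(is_bola_exposed(get_invoice_fixed, "alice", 2))
```

In a real engagement the same question is asked over HTTP with two test accounts: can account A retrieve or modify an object that belongs to account B simply by changing the identifier in the request?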

Social Engineering

Tests the human layer of the security stack: phishing simulations, vishing (voice phishing), pretexting, and physical tailgating. Social engineering engagements assess employee security awareness and the effectiveness of security training programmes. They are often conducted alongside technical pen tests as part of a comprehensive assessment.

Cloud Infrastructure Penetration Testing

Assesses cloud environments (AWS, Azure, GCP) for misconfigurations, overly permissive IAM policies, exposed storage buckets, insecure serverless functions, and inadequate network segmentation. Cloud pen testing requires specific expertise in cloud-native architectures and must operate within the acceptable use policies of cloud providers. Misconfigurations are the dominant category of finding in cloud environments.
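As an illustration of what an overly permissive IAM policy looks like in practice, the following Python sketch flags `Allow` statements that grant every action or apply to every resource. It assumes the AWS JSON policy layout; the sample policy is hypothetical, and the check is deliberately simple (it looks only for a literal `*` and would miss service-level wildcards such as `s3:*` and `NotAction` constructs).

```python
import json
from typing import Any


def as_list(value: Any) -> list:
    """IAM allows a single value or a list; normalise to a list."""
    return value if isinstance(value, list) else [value]


def find_wildcard_statements(policy: dict) -> list[dict]:
    """Return Allow statements that grant every action or cover every resource."""
    flagged = []
    for stmt in as_list(policy.get("Statement", [])):
        if stmt.get("Effect") != "Allow":
            continue
        actions = as_list(stmt.get("Action", []))
        resources = as_list(stmt.get("Resource", []))
        if "*" in actions or "*" in resources:
            flagged.append(stmt)
    return flagged


# Hypothetical policy document for illustration.
policy = json.loads("""
{
  "Version": "2012-10-17",
  "Statement": [
    {"Sid": "ReadLogs", "Effect": "Allow", "Action": "s3:GetObject",
     "Resource": "arn:aws:s3:::example-logs/*"},
    {"Sid": "TooBroad", "Effect": "Allow", "Action": "*", "Resource": "*"}
  ]
}
""")

for stmt in find_wildcard_statements(policy):
    print("Overly permissive statement:", stmt["Sid"])
```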

Physical Penetration Testing

Attempts to gain unauthorised physical access to facilities, server rooms, network equipment, or workstations. Physical pen tests are less common but critically important for organisations where physical security controls are part of the compliance scope (data centres, financial branches, healthcare facilities).

Black Box, White Box, and Grey Box Testing

These terms describe the level of prior knowledge granted to the pen tester at the start of the engagement:

- Black box: The tester begins with no prior knowledge of the target environment — no architecture diagrams, no source code, no credentials. This most closely simulates an external attacker with no inside information. Black box testing is valuable for assessing what an opportunistic attacker can achieve but is less efficient at finding deep application-layer vulnerabilities.

- White box: The tester is provided full documentation: architecture diagrams, source code, credentials, infrastructure configurations. White box testing is the most thorough approach because the tester can audit the entire attack surface with context that an attacker would typically not have. It is best suited for finding the broadest and deepest set of vulnerabilities within a defined timeframe.

- Grey box: The tester is given partial information (typically user-level credentials, some application documentation, or general architecture context), representing a scenario where an attacker has obtained limited inside information, such as a compromised user account. Grey box is the most commonly chosen knowledge level because it balances realism with thoroughness.

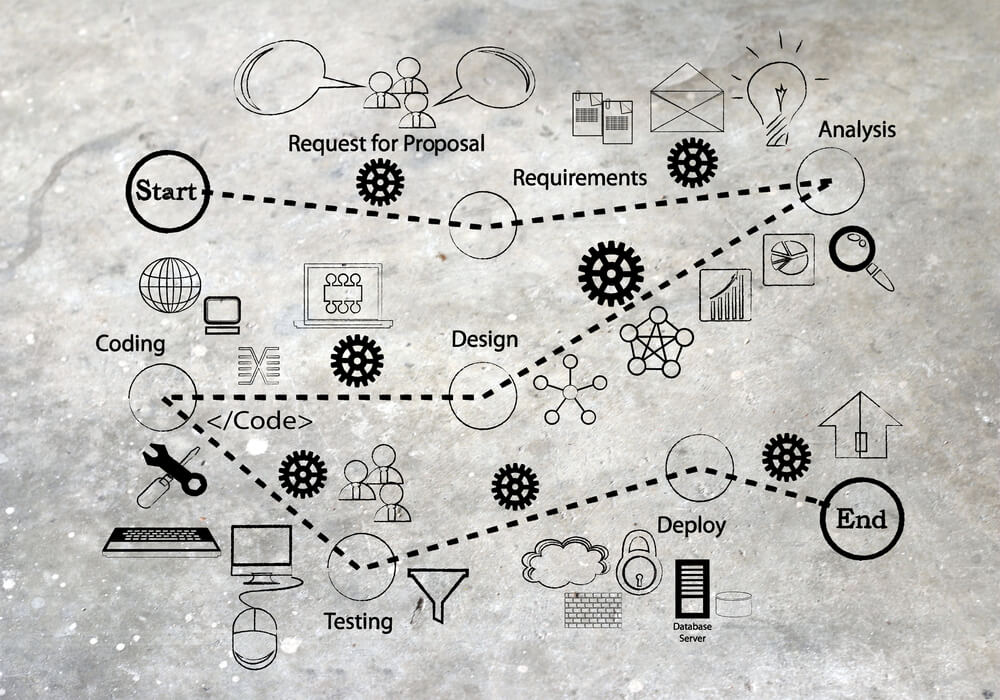

The Penetration Testing Process

Scoping

Before a single packet is sent, the engagement scope must be defined and documented in a written Rules of Engagement (RoE) document. Scoping defines: which systems, IP ranges, and applications are in scope; which are explicitly out of scope; what test techniques are permitted and prohibited; testing windows (to avoid disrupting production systems during peak hours); emergency contact procedures; and which findings count as critical and must be reported immediately rather than held for the final report. Inadequate scoping is one of the most common reasons pen test engagements fail to produce actionable results.
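Scope rules are easier to honour when they are encoded and checked before any tool runs. The following is a minimal Python sketch, assuming the RoE expresses scope as IP ranges; the ranges shown are reserved documentation addresses, and `is_in_scope` is a hypothetical helper.

```python
import ipaddress

# Hypothetical Rules of Engagement ranges (RFC 5737 documentation addresses).
IN_SCOPE = [ipaddress.ip_network("203.0.113.0/24")]
OUT_OF_SCOPE = [ipaddress.ip_network("203.0.113.128/25")]


def is_in_scope(target: str) -> bool:
    """A target is testable only if it is in an in-scope range and not excluded."""
    addr = ipaddress.ip_address(target)
    if any(addr in net for net in OUT_OF_SCOPE):
        return False  # explicit exclusions always win
    return any(addr in net for net in IN_SCOPE)


for candidate in ["203.0.113.10", "203.0.113.200", "198.51.100.5"]:
    print(candidate, "in scope" if is_in_scope(candidate) else "OUT OF SCOPE")
```

Checking exclusions first means an address that appears in both lists is treated as out of scope, which is the safer default.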

Reconnaissance

Passive and active information gathering about the target. Passive reconnaissance uses open-source intelligence (OSINT) techniques to collect publicly available information: DNS records, WHOIS data, SSL certificate transparency logs, LinkedIn employee profiles, GitHub repositories, Shodan data, and archived web content. Active reconnaissance involves direct interaction with the target — DNS enumeration, web crawling, service banner grabbing. Reconnaissance informs the attack strategy for all subsequent phases.
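Much of passive reconnaissance is data clean-up. As a sketch, the Python below extracts unique hostnames from certificate-transparency-style records without touching the target. The record layout, including the `name_value` field holding newline-separated names, is an assumption about how a CT search service might return results, and the sample records are hypothetical.

```python
def extract_hostnames(records: list[dict], domain: str) -> list[str]:
    """Collect unique hostnames under `domain` from CT-log-style records."""
    domain = domain.lower()
    suffix = "." + domain
    found = set()
    for record in records:
        # One certificate can list several names, separated by newlines.
        for name in record.get("name_value", "").splitlines():
            name = name.strip().lower()
            if name.startswith("*."):
                name = name[2:]  # a wildcard entry still reveals the parent name
            if name == domain or name.endswith(suffix):
                found.add(name)
    return sorted(found)


# Hypothetical records; the "name_value" field name is an assumption.
records = [
    {"name_value": "www.example.com\napi.example.com"},
    {"name_value": "*.staging.example.com"},
    {"name_value": "API.example.com"},
    {"name_value": "unrelated.example.org"},
]

print(extract_hostnames(records, "example.com"))
```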

Scanning and Enumeration

Systematic identification of live hosts, open ports, running services, service versions, and operating system fingerprints. Vulnerability scanners identify known CVEs associated with discovered service versions. Enumeration goes deeper — extracting user accounts, shares, service configurations, and application structure that informs targeted exploitation attempts. This phase transforms reconnaissance data into a prioritised attack surface map.
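Turning scan output into an attack surface map usually starts with parsing. The sketch below uses Python's standard library to list open ports from Nmap-style XML (the format produced by `nmap -oX`). The sample document is a trimmed, hypothetical example, and real output contains many more elements and attributes.

```python
import xml.etree.ElementTree as ET

# Trimmed, hypothetical sample in the shape of Nmap's XML output.
SAMPLE_XML = """<nmaprun>
  <host>
    <address addr="203.0.113.10" addrtype="ipv4"/>
    <ports>
      <port protocol="tcp" portid="22">
        <state state="open"/>
        <service name="ssh" product="OpenSSH" version="8.9"/>
      </port>
      <port protocol="tcp" portid="23">
        <state state="closed"/>
        <service name="telnet"/>
      </port>
      <port protocol="tcp" portid="443">
        <state state="open"/>
        <service name="https"/>
      </port>
    </ports>
  </host>
</nmaprun>"""


def open_services(xml_text: str) -> list[tuple[str, int, str, str]]:
    """Return (host, port, service name, version string) for every open port."""
    results = []
    root = ET.fromstring(xml_text)
    for host in root.iter("host"):
        address = host.find("address")
        addr = address.get("addr") if address is not None else "unknown"
        for port in host.iter("port"):
            state = port.find("state")
            if state is None or state.get("state") != "open":
                continue
            service = port.find("service")
            name = service.get("name", "") if service is not None else ""
            product = service.get("product", "") if service is not None else ""
            version = service.get("version", "") if service is not None else ""
            banner = f"{product} {version}".strip()
            results.append((addr, int(port.get("portid")), name, banner))
    return results


for row in open_services(SAMPLE_XML):
    print(row)
```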

Exploitation

Attempting to exploit discovered vulnerabilities to achieve a defined objective: gaining initial access, bypassing authentication, extracting data, or executing arbitrary code. Exploitation is the phase that distinguishes pen testing from scanning. Not all vulnerabilities identified by scanners are exploitable in the actual environment — exploitation confirms real-world risk and eliminates false positives that waste remediation effort.

Post-Exploitation

Following initial compromise, post-exploitation activities determine what an attacker could achieve with that foothold: escalating local privileges to administrator or root, harvesting credentials from memory or configuration files, moving laterally to adjacent systems, maintaining persistence through backdoors or scheduled tasks, and reaching high-value targets (domain controllers, databases, source code repositories). Post-exploitation demonstrates the full business impact of a successful initial compromise — often dramatically more severe than the entry point vulnerability alone would suggest.

Reporting

The deliverable that justifies the entire engagement. A high-quality penetration test report includes an executive summary suitable for non-technical stakeholders (scope, overall risk assessment, critical findings summary) and a technical findings section in which each vulnerability is documented with a title, severity rating (CVSS score), affected component, description, evidence (screenshots, request/response captures), business impact, and specific remediation guidance. Findings should be prioritised by exploitability and business impact, not just CVSS score. A report that lists vulnerabilities without prioritisation or remediation guidance transfers the analytical work back to the client and reduces the value of the engagement.
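To make the point about prioritisation concrete, here is a Python sketch that ranks findings by demonstrated exploitability and business impact before CVSS. The severity bands follow the CVSS v3.x qualitative scale; the `Finding` fields, the 1 to 5 impact scale, the ordering rule, and the sample findings are all hypothetical choices for illustration.

```python
from dataclasses import dataclass


def cvss_severity(score: float) -> str:
    """Map a CVSS v3.x base score to its qualitative severity rating."""
    if score == 0.0:
        return "None"
    if score < 4.0:
        return "Low"
    if score < 7.0:
        return "Medium"
    if score < 9.0:
        return "High"
    return "Critical"


@dataclass
class Finding:
    title: str
    cvss: float
    exploited: bool        # was exploitation demonstrated during the test?
    business_impact: int   # 1 (minor) to 5 (severe), agreed with the client


def priority_key(finding: Finding) -> tuple:
    """Sort demonstrated exploits first, then business impact, then CVSS."""
    return (not finding.exploited, -finding.business_impact, -finding.cvss)


findings = [
    Finding("Outdated TLS configuration", 7.5, False, 2),
    Finding("IDOR on invoice endpoint", 6.5, True, 5),
    Finding("Verbose error messages", 5.3, False, 1),
    Finding("SQL injection in search", 9.8, True, 5),
]
ranked = sorted(findings, key=priority_key)

for f in ranked:
    print(f"{f.title}: CVSS {f.cvss} ({cvss_severity(f.cvss)})")
```

Under this ordering the exploited Medium-rated IDOR ranks above the unexploited High-rated TLS issue, which is the kind of outcome a CVSS-only sort would miss.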

OWASP Top 10 as a Framework

The OWASP Top 10 is the most widely referenced framework for web application security risk. The 2021 edition remains the current published standard; an updated version reflecting 2024-2025 threat data is in progress. The 2021 Top 10 categories are:

- Broken Access Control

- Cryptographic Failures

- Injection (including SQL injection, command injection, and LDAP injection)

- Insecure Design

- Security Misconfiguration

- Vulnerable and Outdated Components

- Identification and Authentication Failures

- Software and Data Integrity Failures

- Security Logging and Monitoring Failures

- Server-Side Request Forgery (SSRF)

Broken Access Control has held the top position since 2021, reflecting the persistent failure of organisations to implement least-privilege access controls correctly. Every web application pen test should verify coverage against all 10 categories as a minimum baseline. The OWASP Web Security Testing Guide (WSTG) provides specific test cases that can be mapped to each category.
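A coverage check of this kind can be as simple as comparing the test plan against the category list. The following is a small Python sketch; the category names follow the 2021 list above, while the test plan structure and the test case names in it are hypothetical.

```python
OWASP_TOP_10_2021 = [
    "A01 Broken Access Control",
    "A02 Cryptographic Failures",
    "A03 Injection",
    "A04 Insecure Design",
    "A05 Security Misconfiguration",
    "A06 Vulnerable and Outdated Components",
    "A07 Identification and Authentication Failures",
    "A08 Software and Data Integrity Failures",
    "A09 Security Logging and Monitoring Failures",
    "A10 Server-Side Request Forgery (SSRF)",
]


def uncovered_categories(test_plan: dict[str, list[str]]) -> list[str]:
    """Return categories that have no planned test cases, in Top 10 order."""
    return [cat for cat in OWASP_TOP_10_2021 if not test_plan.get(cat)]


# Hypothetical test plan mapping categories to planned test cases.
test_plan = {
    "A01 Broken Access Control": ["horizontal access check", "forced browsing"],
    "A03 Injection": ["SQL injection in search", "command injection in export"],
    "A05 Security Misconfiguration": [],  # listed but nothing planned yet
}

for category in uncovered_categories(test_plan):
    print("No test cases planned for:", category)
```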

Modern Penetration Testing Tools

Metasploit Framework

The most widely used exploitation framework, maintained by Rapid7. Metasploit provides a library of exploit modules, payloads, and post-exploitation tools that can be composed to execute attack chains efficiently. It is used in both manual engagements and as a component of automated attack simulation. The commercial Metasploit Pro version adds workflow automation and reporting features.

Burp Suite Pro

The standard tool for web application and API penetration testing. Burp Suite Pro provides an intercepting proxy, scanner, repeater, intruder (for parameter fuzzing and brute force), sequencer (for session token entropy analysis), and decoder. Its BApp Store extension ecosystem significantly extends its capability for specific test types. For web application pen testing, Burp Suite Pro is effectively mandatory.

ZAP (formerly OWASP ZAP)

The open-source alternative to Burp Suite. ZAP began as an OWASP project, left the OWASP Foundation in 2023, and is now maintained as ZAP by Checkmarx. It is a strong choice for organisations that need automated scanning capability in CI/CD pipelines (via the ZAP API and Docker images) without commercial licensing costs. ZAP’s active scan capability is less sophisticated than Burp Suite Pro for manual engagements but is production-ready for automated baseline scanning.

Nmap

The definitive network scanning and host discovery tool. Nmap’s scripting engine (NSE) extends basic port scanning to service version detection, vulnerability identification, and protocol-specific enumeration. Despite being over 25 years old, Nmap remains the first tool used in almost every network penetration test.

Nikto

A web server scanner that checks for known vulnerabilities, misconfigured HTTP headers, default credentials, outdated software versions, and dangerous HTTP methods. Nikto is not subtle — it generates significant log traffic and is easily detected — but it is fast and identifies a broad range of common issues quickly during initial scanning phases.
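To show the kind of check involved, the Python sketch below reports which commonly expected security headers are missing from a set of response headers. This is not how Nikto itself is implemented; the header list is a short illustrative selection and the sample response is hypothetical.

```python
# Headers commonly expected on HTTPS responses; this short list is illustrative.
EXPECTED_HEADERS = [
    "Strict-Transport-Security",
    "Content-Security-Policy",
    "X-Content-Type-Options",
    "X-Frame-Options",
]


def missing_security_headers(headers: dict[str, str]) -> list[str]:
    """Return expected headers absent from a response (names are case-insensitive)."""
    present = {name.lower() for name in headers}
    return [h for h in EXPECTED_HEADERS if h.lower() not in present]


# Hypothetical response headers captured during a test.
response_headers = {
    "Server": "nginx",
    "strict-transport-security": "max-age=31536000",
    "X-Content-Type-Options": "nosniff",
}

print(missing_security_headers(response_headers))
```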

Kali Linux

The standard operating system for penetration testing. Kali Linux bundles hundreds of security tools (including Metasploit, Burp Suite Community Edition, Nmap, Nikto, Wireshark, John the Ripper, Hashcat, and aircrack-ng) in a pre-configured, regularly updated environment. Most professional penetration testers work from Kali Linux (or a comparable distribution like Parrot OS) as their primary operating environment.

Nuclei

A fast, template-based vulnerability scanner developed by ProjectDiscovery. Nuclei’s community-maintained template library covers thousands of CVEs, misconfigurations, exposed panels, and technology-specific vulnerabilities. Its speed and template extensibility have made it a standard component of modern attack surface management and pen testing workflows, particularly for initial reconnaissance and bulk scanning phases.

AI-Powered Penetration Testing

AI is beginning to materially accelerate multiple phases of penetration testing. The impact is already visible in current tooling and practice:

- Automated vulnerability discovery: AI models fine-tuned on vulnerability databases and code patterns can identify security weaknesses in source code and running applications faster than manual review, flagging candidates for manual exploitation confirmation.

- Attack path generation: Given a network topology and discovered vulnerabilities, AI systems can enumerate potential attack paths to high-value targets, helping pen testers prioritise exploitation sequences.

- Intelligent fuzzing: AI-guided fuzzing uses coverage feedback and learned input patterns to generate test inputs that exercise deeper code paths than traditional random or mutation-based fuzzing, increasing the probability of discovering memory corruption and injection vulnerabilities.

- Adversary simulation: Commercial platforms (including Pentera and Horizon3.ai) use AI to automate continuous penetration testing, running attack simulations against production environments on a scheduled basis to provide continuous security validation between manual pen test engagements.

AI does not replace skilled human penetration testers — particularly for complex business logic flaws, chained attack scenarios, and social engineering — but it is raising the baseline capability of automated tools and reducing the time required to cover known vulnerability classes.
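The attack path idea can be illustrated without any AI at all. The sketch below uses plain breadth-first search over a reachability graph, which is the simplest baseline that more sophisticated tooling builds on, not a reproduction of any commercial product. The graph, the node names, and the meaning given to an edge are hypothetical.

```python
from collections import deque

# Hypothetical reachability graph: an edge A -> B means "a foothold on A
# allows an attack on B", for example via a reachable, exploitable service.
GRAPH = {
    "internet": ["web-server"],
    "web-server": ["app-server", "jump-host"],
    "app-server": ["database"],
    "jump-host": ["domain-controller"],
    "database": [],
    "domain-controller": [],
}


def shortest_attack_path(graph, start, target):
    """Breadth-first search for the path with the fewest hops, or None."""
    queue = deque([[start]])
    visited = {start}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == target:
            return path
        for neighbour in graph.get(node, []):
            if neighbour not in visited:
                visited.add(neighbour)
                queue.append(path + [neighbour])
    return None


print(shortest_attack_path(GRAPH, "internet", "domain-controller"))
print(shortest_attack_path(GRAPH, "internet", "database"))
```

A fewest-hops search treats every edge as equally easy; a more realistic model would weight edges by exploit difficulty or likelihood of detection.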

Manual vs Automated Penetration Testing

Automated scanners and AI-assisted tools can identify known vulnerability patterns at speed and scale. Manual penetration testing discovers the vulnerabilities that automated tools cannot find: business logic flaws (where the application behaves correctly from a technical standpoint but can be abused by understanding the intended workflow), complex authentication bypass chains, race conditions, and logic errors in multi-step processes.

The practical answer is that both are necessary. Automated scanning provides broad, fast baseline coverage and should be integrated into CI/CD pipelines. Manual penetration testing provides depth, creativity, and the adversarial thinking that finds the vulnerabilities most likely to be exploited by real attackers. Relying exclusively on automated scanning gives false confidence; relying exclusively on manual testing is inefficient and leaves known vulnerability classes unchecked between engagements.

Penetration Testing vs Vulnerability Scanning

These terms are frequently conflated, to the detriment of security programmes that budget for one expecting the other. The distinction is fundamental:

- Vulnerability scanning is automated, non-exploitative, and produces a list of potential vulnerabilities based on signature matching and version checks. It is fast, repeatable, and scalable. It cannot confirm exploitability, does not chain vulnerabilities together, and cannot assess business logic. Because it infers vulnerabilities from signatures and version strings rather than confirming them, false positives are common.

- Penetration testing involves human expertise, attempts actual exploitation, chains vulnerabilities to demonstrate real-world attack paths, and assesses business impact. It confirms which vulnerabilities are genuinely exploitable in the specific environment and provides findings that carry the weight of demonstrated evidence rather than theoretical possibility.

Vulnerability scanning is a continuous operational activity. Penetration testing is a periodic, in-depth assessment. Both have a place in a mature security programme, but they are not interchangeable.
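One reason version-based checks produce false positives is that an advertised version does not always reflect the patches applied. The following Python sketch shows this under stated assumptions: the version numbers and the backported-patch scenario are hypothetical, and real scanners use far richer signatures than a single comparison.

```python
def parse_version(text: str) -> tuple[int, ...]:
    """Turn '2.4.49' into (2, 4, 49) so versions compare numerically."""
    return tuple(int(part) for part in text.split("."))


def scanner_flags(banner_version: str, fixed_in: str) -> bool:
    """Signature-style check: flag anything older than the fixed release."""
    return parse_version(banner_version) < parse_version(fixed_in)


# Hypothetical service and advisory data.
banner_version = "1.2.3"   # what the service advertises
fixed_in = "1.2.5"         # upstream release containing the fix
backported_patch = True    # the vendor patched 1.2.3 without renumbering it

flagged = scanner_flags(banner_version, fixed_in)
actually_vulnerable = flagged and not backported_patch

print("Scanner flags it:", flagged)
print("Actually vulnerable:", actually_vulnerable)
```

The scanner reports a finding, but only an exploitation attempt or a patch-level review can establish whether the weakness is really present.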

How Often Should You Penetration Test?

Minimum frequency is often dictated by compliance requirements (PCI-DSS: annually; after significant changes). But compliance minimums are not the same as adequate security practice. Practical guidance:

- Conduct a full-scope penetration test at least annually for any internet-facing application or infrastructure handling sensitive data

- Conduct targeted pen tests after significant architectural changes, new major feature releases, cloud migrations, or M&A activities that introduce new systems

- Run continuous automated attack simulation between manual engagements for high-risk environments

- Conduct phishing simulations quarterly for organisations where social engineering is a significant threat vector

The frequency question should ultimately be driven by threat model, not by the minimum needed to satisfy an auditor. An organisation that processes financial transactions or holds significant personal data and tests only annually is leaving a large window of undetected exposure.

VTEST’s Penetration Testing Services

VTEST provides structured penetration testing engagements conducted by certified security professionals. Our engagements follow a documented methodology aligned with OWASP, PTES (Penetration Testing Execution Standard), and NIST SP 800-115, scoped precisely to client requirements and delivered with reports that are useful to both technical and executive audiences.

We conduct web application, API, mobile, and network penetration tests for clients across financial services, healthcare, SaaS, and enterprise software. Every engagement includes a pre-test scoping call, a written Rules of Engagement document, a detailed findings report with CVSS-rated vulnerabilities and specific remediation guidance, and a retest of remediated findings to confirm closure. If you are planning an upcoming pen test, reviewing your compliance obligations, or have concerns about specific areas of your attack surface, contact VTEST to discuss scope and approach.

Namrata Shinde — Functional Testing Expert, VTEST

Namrata is a Functional Testing Expert at VTEST with deep experience in mobile, UI, and end-to-end testing. She ensures every release is thoroughly validated and bulletproof before reaching end users.